Research — March 25, 2026

Micron Technology Inc. (NASDAQ: MU) delivered a decisive earnings beat in its fiscal second quarter, powered by the strength of the AI-led memory cycle.

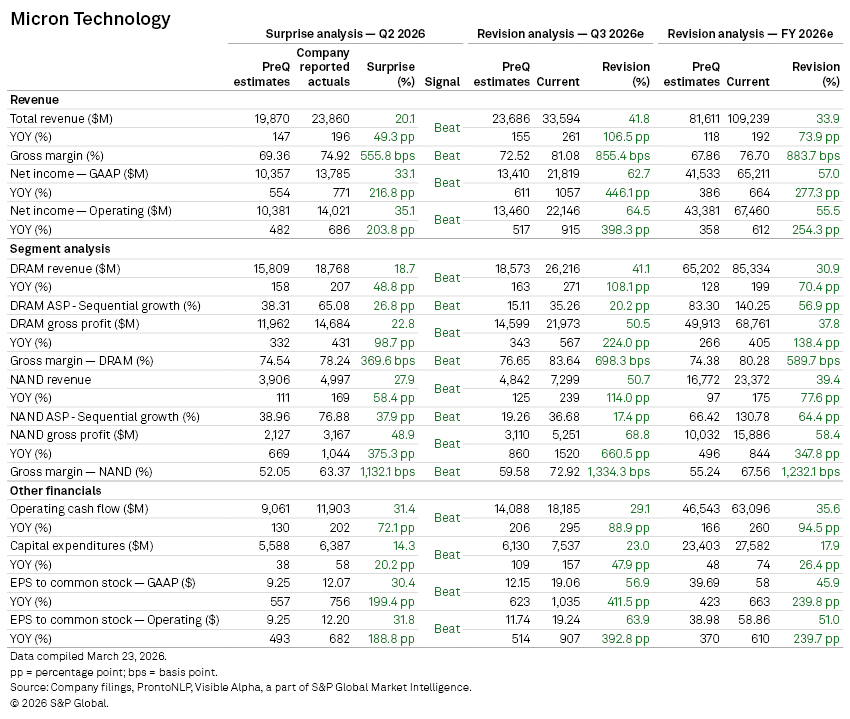

Revenue rose to $23.86 billion in fiscal Q2 2026, while adjusted earnings per share reached $12.20, comfortably ahead of Visible Alpha consensus expectations.

The company’s forward guidance also exceeded expectations, reinforcing the view that demand linked to AI infrastructure remains robust.

Analysts have responded by sharply raising their near-term forecasts. Consensus estimates for Micron's fiscal third quarter now point to materially higher revenues and profitability across both the DRAM and NAND segments, reflecting sustained pricing momentum and tight supply conditions.

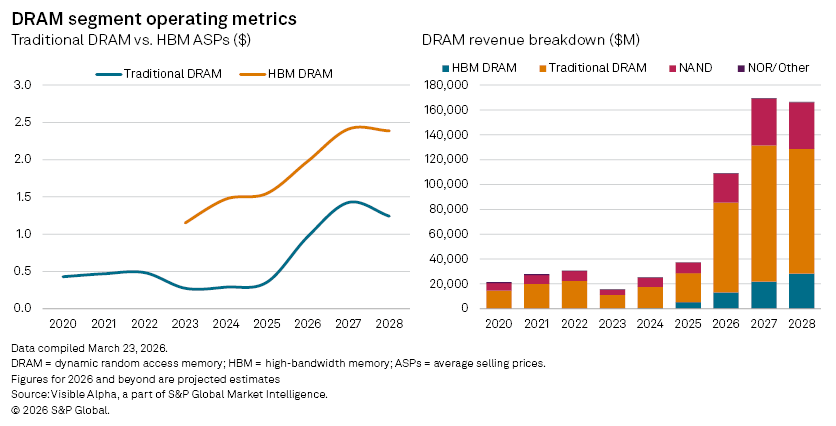

The most pronounced upgrades are concentrated in DRAM, where Micron has significant exposure to AI-related demand. Q3 DRAM revenues are now projected to be 41% higher than pre-earnings expectations, with full-year 2026 estimates up 31%. Gross profit forecasts have been revised even more aggressively, capturing both improved pricing and a richer product mix. As a result, consensus earnings for fiscal 2026 have climbed to roughly $58 per share.

High-bandwidth memory (HBM), a critical enabler of AI workloads, remains a central driver. Q3 HBM revenue estimates have risen to $3.7 billion, up from $3.1 billion prior to the results, while full-year projections have increased to $13.2 billion from $11.7 billion.
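The size of the HBM upgrades is easier to compare when expressed as percentage changes. The short Python sketch below uses only the consensus figures cited above (in billions of US dollars); the `pct_revision` helper and the period labels are illustrative names chosen here, not part of any Visible Alpha tool or dataset.

```python
# Consensus HBM revenue estimates cited above, in billions of USD:
# (pre-earnings estimate, post-earnings estimate). Labels are illustrative.
hbm_estimates = {
    "Q3 FY2026": (3.1, 3.7),
    "FY2026": (11.7, 13.2),
}


def pct_revision(before: float, after: float) -> float:
    """Return the percentage change from a prior estimate to a revised one."""
    return (after - before) / before * 100


# Print each revision with a signed percentage rounded to one decimal place.
for period, (before, after) in hbm_estimates.items():
    change = pct_revision(before, after)
    print(f"{period}: ${before:.1f}B -> ${after:.1f}B ({change:+.1f}%)")
```

On these inputs, the fiscal Q3 HBM estimate rises by roughly 19% and the full-year estimate by roughly 13%.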

HBM plays an important role in keeping AI workloads fast and efficient. By stacking conventional DRAM dies vertically, HBM delivers far higher bandwidth than standard memory while consuming less power per bit transferred. As hyperscale data center investment accelerates, memory is emerging as a critical bottleneck and a source of pricing power.

The resulting supply-demand imbalance has driven a sharp upswing in memory pricing across the industry, benefiting Micron.

This article was published by Visible Alpha, part of S&P Global Market Intelligence, and not by S&P Global Ratings, which is a separately managed division of S&P Global.
