Research — March 13, 2026

By Jean Atelsek

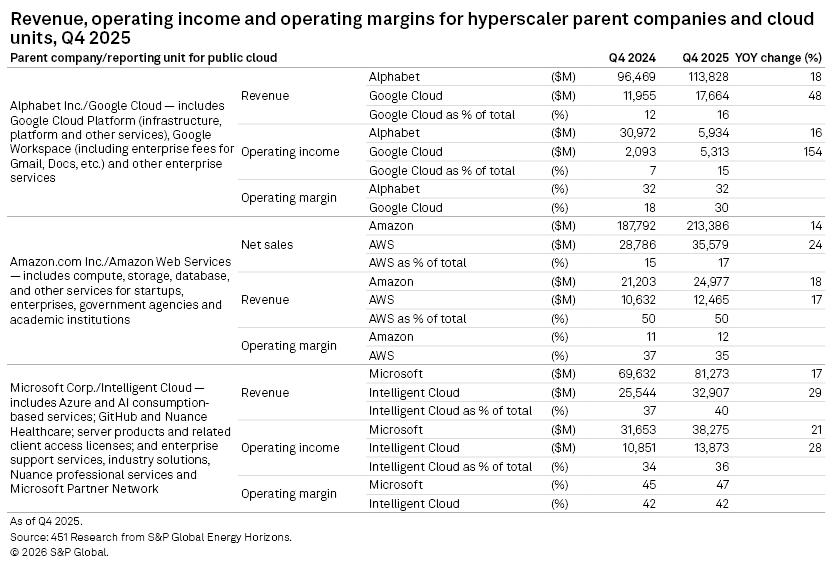

AI services and record capital outlays again dominated the latest round of quarterly earnings calls from Alphabet Inc., Amazon.com Inc. and Microsoft Corp., but market analysts cannot yet draw a line between AI investments and appreciable returns. No matter: The providers themselves are reaping benefits, and commercial and captive demand for AI capacity continues to exceed available supply. Officials at all three companies report that customers running AI workloads are consuming more core services as well. While inferencing is ultimately expected to be a bigger source of revenue than model training, it is not cheap either.

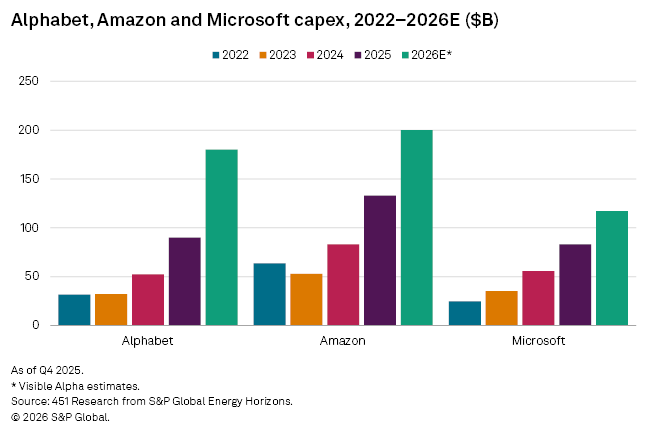

During their fourth-quarter 2025 earnings calls, Alphabet, Amazon and Microsoft collectively put their capex projections for 2026 — much of it for technical infrastructure including densely packed AI data centers — at a whopping $495 billion, up 61% from 2025 and six times the 2020 level. Even with record sales, capital spending is outpacing revenues and pushing the vendors to pursue debt financing. While Wall Street analysts are growing impatient for evidence that these investments are paying off, the providers are playing a long game, having experienced substantial AI returns in their internal operations and strong demand from enterprise customers. To help protect future margins, all three vendors are shifting more activity toward proprietary AI models and hardware accelerators. Inferencing is expected to dominate future AI activity — while not as resource-intensive as model training, the costs are significant.
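The growth figures above imply approximate combined capex levels for earlier years. The following is a back-of-the-envelope sketch in Python, assuming the 61% increase and the sixfold multiple both apply to the same combined total. The function and variable names are illustrative, and the derived baselines are implied values, not figures reported by the companies.

```python
# Back-of-the-envelope check of the capex figures cited above.
# Inputs come from the article; derived baselines are implied, not reported.
CAPEX_2026_BN = 495.0     # combined 2026 projection, in $ billions
GROWTH_VS_2025 = 0.61     # "up 61% from 2025"
MULTIPLE_VS_2020 = 6.0    # "six times the 2020 level"


def implied_baseline(current: float, multiple: float) -> float:
    """Return the earlier-period value implied by a growth multiple."""
    return current / multiple


capex_2025_bn = implied_baseline(CAPEX_2026_BN, 1.0 + GROWTH_VS_2025)
capex_2020_bn = implied_baseline(CAPEX_2026_BN, MULTIPLE_VS_2020)

print(f"Implied 2025 capex: ~${capex_2025_bn:.0f} billion")
print(f"Implied 2020 capex: ~${capex_2020_bn:.1f} billion")
```

Under these assumptions, the combined total works out to roughly $307 billion for 2025 and about $82.5 billion for 2020.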

Amazon, Alphabet and Microsoft see internal returns on their AI spending

The parent companies of Amazon Web Services Inc., Azure and Google Cloud are finding returns on their AI investments in their own backyards. While these may not appear in their cloud unit results, the positives are justifying the boom in spending needed to bring more AI services to market. Amazon CEO Andy Jassy, for instance, noted that customers using Rufus (Amazon's shopping agent) are 60% more likely to complete a purchase on the site. And Alphabet CEO Sundar Pichai cited the advantage of deploying the company's Gemini models to improve the product experience across Google Search, YouTube LLC and other properties. This is in addition to improved developer velocity thanks to AI-powered coding assistants. Alphabet CFO Anat Ashkenazi observed that using AI within the finance team — including using agents to help reconcile charges and pay invoices — makes it possible to do more within the current IT footprint and frees up resources to invest in growth.

Custom silicon and proprietary models strengthen vertical integration of hyperscaler AI stacks

In an ecosystem largely dominated by NVIDIA Corp. and Advanced Micro Devices Inc. for chips and by Anthropic PBC and OpenAI LLC for models, the parent companies of AWS, Azure and Google Cloud are leaning in on the differentiation of custom silicon and purpose-built large language models (LLMs).

During Microsoft's fourth-quarter 2025 earnings call, CEO Satya Nadella touted the performance and cost-efficiency of the company's second-generation Maia 200 accelerator for inferencing; the chip's rollout in January followed the company's August 2025 introduction of MAI-1-preview (an LLM designed to follow instructions and answer queries) and MAI-Voice-1 (a speech generation model). Likewise, he noted that the company's Cobalt 200 CPU, introduced in November 2025, offers a 50% performance boost versus the first-generation version. Microsoft is pursuing an up-the-stack approach to AI profitability, so these launches serve a dual purpose: reducing its dependence on third-party suppliers and designing components specifically to power its suite of agentic Copilots. Nadella noted that Maia 200 deployments would scale first with the company's Superintelligence Team, launched in November 2025, as well as for Copilot and third-party inferencing applications.

In addition to plugging Google's Gemini family of LLMs, which underpin the company's AI-powered Search, YouTube, Workspace, Maps and other services, Pichai highlighted the January launch of Project Genie and the 11-billion-parameter Genie 3, Google DeepMind's "world model" that generates interactive and physically consistent digital environments from text prompts; likely applications include game prototyping and robotics training, among many others. On the silicon front, Google has had its own AI accelerator, the Tensor Processing Unit (TPU), since 2015 — it is now in its 7th generation.

AWS introduced Graviton CPUs in 2018 as a more price-performant alternative to x86 processors; at AWS re:Invent in December 2025, the company announced the 5th generation of Gravitons as well as the Trainium3 AI accelerator, which is being developed for use in both training and inferencing applications, apparently subsuming the functionality of AWS' earlier Inferentia chip. In his earnings call comments, Jassy noted the Trainium line is a multibillion-dollar annualized revenue run rate business, thanks in large part to Anthropic's commitment to use 500,000 of the processors to train its Claude AI models (Anthropic has made similar pledges to Google and Microsoft). On the LLM front, Amazon offers a suite of Nova models, including Nova Forge, which lets customers blend proprietary data with Nova training data to create customized models.

Vertical integration has advantages for these vendors — it streamlines supply chains (minimizing the risk of shortages), allows for faster innovation and tighter integration, and lets them capture margin that would otherwise go to outside suppliers. It also requires investment in R&D and specialized talent and increases lock-in risk for customers. With their growing capex projections, all three companies are full steam ahead on building proprietary advantage for the AI transition.

How much does it cost to build and run a 1-gigawatt AI data center?

Microsoft's Nadella and AWS' Jassy called out fourth-quarter 2025 additions of 1 gigawatt or more to their capacity, expressing it not in terms of servers or chips but in terms of power, which is the primary resource constraint at this point. A gigawatt of power, considered a baseline for training next-generation AI models, is said to be enough to supply 750,000 US homes, so it is no wonder that investors want evidence of returns (and utility ratepayers are pushing back). Nadella noted that Microsoft is using tokens per watt per dollar as a key metric for assessing the company's optimization efforts, which will rely on more efficient silicon, higher utilization and software innovation.
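To put the power figures in perspective, the short Python sketch below works out the average draw per home implied by the 1-gigawatt and 750,000-home figures, and shows one literal reading of the tokens-per-watt-per-dollar metric. The article does not give Microsoft's actual formula, so the function `tokens_per_watt_per_dollar` and every input passed to it are hypothetical and serve only to illustrate how such a ratio would respond to efficiency gains.

```python
# 1 GW spread across 750,000 homes (both figures from the article).
GIGAWATT_W = 1_000_000_000
HOMES = 750_000

avg_kw_per_home = GIGAWATT_W / HOMES / 1_000
print(f"Implied average draw per home: ~{avg_kw_per_home:.2f} kW")


def tokens_per_watt_per_dollar(tokens: float, watts: float, dollars: float) -> float:
    """One literal reading of the metric: tokens / (watts * dollars).

    ASSUMPTION: the article does not state how Microsoft computes this;
    the formula here is an illustrative interpretation only.
    """
    if watts <= 0 or dollars <= 0:
        raise ValueError("watts and dollars must be positive")
    return tokens / (watts * dollars)


# Hypothetical inputs, chosen only to show how the ratio moves when
# the same power and spend yield 50% more tokens.
baseline = tokens_per_watt_per_dollar(tokens=1e9, watts=1_000, dollars=100)
improved = tokens_per_watt_per_dollar(tokens=1.5e9, watts=1_000, dollars=100)
print(f"Relative improvement: {improved / baseline:.2f}x")
```

Because the metric is a ratio, more efficient silicon (fewer watts), higher utilization (more tokens) and lower cost (fewer dollars) would each raise it under this reading.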

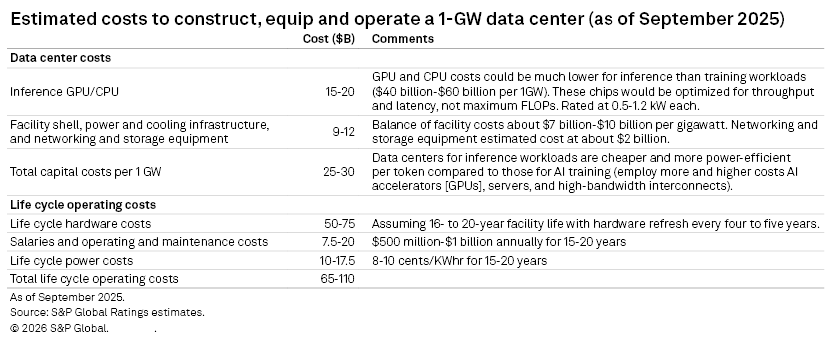

What does it cost to bring a 1GW facility online? In "Industry Credit Outlook: Despite the Risks, Tech, Power and Data Center Companies Are Going All-In on Their AI Gamble," S&P Global Ratings documents the impact of AI on data center and power demand. While AI model training is seen as the predominant use case today, AI inferencing (which is less computationally intense than training but requires greater connectivity and lower latency to deliver output to end customers) is ultimately expected to represent the dominant application by the end of the decade. The table below shows the report's estimate of the total cost of building and operating an inferencing data center over a 15- to 20-year span.

With capital costs estimated at $25 billion to $30 billion per gigawatt, it is easy to see why hyperscaler capex is exploding as the companies race to build facilities that will serve a generation of AI-driven applications. One caveat: these estimates do not include the cost of application-specific integrated circuits (ASICs), which tend to cost more up front than general-purpose GPUs but are designed for more cost-effective operation. ASICs, not just CPUs and GPUs, are expected to be a big part of the inference stack. The NVIDIA-Groq acquihire and OpenAI's recently announced partnership with Cerebras support this expectation.
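As a rough illustration of scale, the per-gigawatt capital cost range can be set against the $495 billion combined capex projection cited earlier. The Python sketch below assumes, purely for illustration, that the entire projection went to 1GW-class AI facilities. That overstates the result, since only part of the capex is for AI data centers, so the outputs should be read as upper bounds. The variable and function names are hypothetical.

```python
# Capital cost range per gigawatt and the combined capex projection,
# both taken from the article (in $ billions).
COST_PER_GW_LOW_BN = 25.0
COST_PER_GW_HIGH_BN = 30.0
CAPEX_2026_BN = 495.0


def gigawatts_affordable(budget_bn: float, cost_per_gw_bn: float) -> float:
    """Return how many gigawatts a budget covers at a given cost per GW."""
    if cost_per_gw_bn <= 0:
        raise ValueError("cost_per_gw_bn must be positive")
    return budget_bn / cost_per_gw_bn


# ASSUMPTION: all projected capex funds AI data centers (an upper bound).
gw_at_high_cost = gigawatts_affordable(CAPEX_2026_BN, COST_PER_GW_HIGH_BN)
gw_at_low_cost = gigawatts_affordable(CAPEX_2026_BN, COST_PER_GW_LOW_BN)

print(f"Upper-bound capacity: {gw_at_high_cost:.1f} to {gw_at_low_cost:.1f} GW")
```

Even under this generous assumption, the combined 2026 budget would cover fewer than 20 gigawatts of new capacity across the three companies.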

451 Research from S&P Global Energy Horizons provides technology industry research, data, and advisory solutions. For more information or to contact us, please visit 451 Research.

This article was published by S&P Global Market Intelligence and not by S&P Global Ratings, which is a separately managed division of S&P Global.
