Research — April 8, 2026

Large language models are predominantly defined by their training data. Training data has mostly been garnered from the internet — an internet that is now being skewed by more AI-generated content in a constant feedback loop. As such, differentiated outcomes with an off-the-shelf model are often determined by data that can be provided only by the user. For businesses, this has created a race to lock down IP and AI infrastructure so that proprietary data isn't fed into the voracious training loop of models. Consumers, however, are becoming the often-unwitting data "donors" and test subjects of this AI Wild West.

The privacy implications of this shift are potentially huge as a large swath of consumers adopt GenAI. Often, this adoption isn't noticed as technology products and services build in GenAI-driven features for consumers and quietly update their terms of service, further muddying the ethics associated with digital consent.

It is not far-fetched to imagine a world with two distinct classes of GenAI tool users: professionals in the business sphere, who benefit from strong data architecture and legal data protection mechanisms, and mainstream consumers, who are essentially treated as sources of new data for model training. Most businesses are doing all they can to protect proprietary and sensitive data from models, while consumers rarely have equivalent opportunities. This dichotomy could drive a wedge into the subjective experience of using AI. Consent and preference methodology has always been a challenge within digital channels, but the pace of evolution of AI-enabled technology has created deeper problems. Consumers largely couldn't keep up with privacy policies or terms of service before, and are unlikely to be able to do so going forward. Enterprises may benefit as they continue to protect their most sensitive data while drawing on the very models that are trained on information from consumers.

Is data privacy really 'dead'?

Our concept of personal privacy is relatively nascent in recorded human history and is legally codified in vastly different ways across global jurisdictions. Privacy threats, as we generally understand them, are defined by access to, control of and usage (or misuse) of personal information — digital or otherwise. Historically, it was difficult for parties outside of an individual's immediate social circle to obtain, document, integrate, verify and systematically abuse information about others on a massive scale. Gossip was often the biggest threat to privacy. The world is radically different today, as data collection and access are increasingly industrialized and therefore beyond individual control.

Yet moral panic over privacy is not new on a contemporary timeline. A seminal Harvard Law Review article, "The Right to Privacy," was published in 1890 by Samuel D. Warren and future US Supreme Court justice Louis D. Brandeis. The article was inspired by an emergent technology that had alarmed the public via its ability to record and circulate private moments captured surreptitiously amid public life: the camera. Over 120 years later, in the same Harvard journal, the article "What Privacy Is For" made waves amid public privacy and surveillance revelations associated with the US government that were rattling the Western economic sphere. While many reasoned at the time that personal privacy only mattered if you had "something to hide," the article argued against this fallacy, underscoring the structural role that personal privacy plays in supporting liberal democracy and the continued ability to innovate.

The narrative of privacy being "dead" is nothing new. Yet it continues to repeat, because people do care about privacy, especially as technology evolves in new and unexpected ways.

Power dynamics in the race to use AI

Organizations, meanwhile, have ample motivation to protect their sensitive and proprietary business data from being fed into off-the-shelf models that may benefit competitors or peers. Once data is used to train an LLM, it is widely understood that the data is functionally impossible to "claw back" or fully eliminate. Adversarial techniques, such as prompt injection, can potentially extract training data from models. But the effort to protect sensitive organizational data from these models is not one-dimensional. Businesses take extensive measures — both cultural and technical — to protect information.

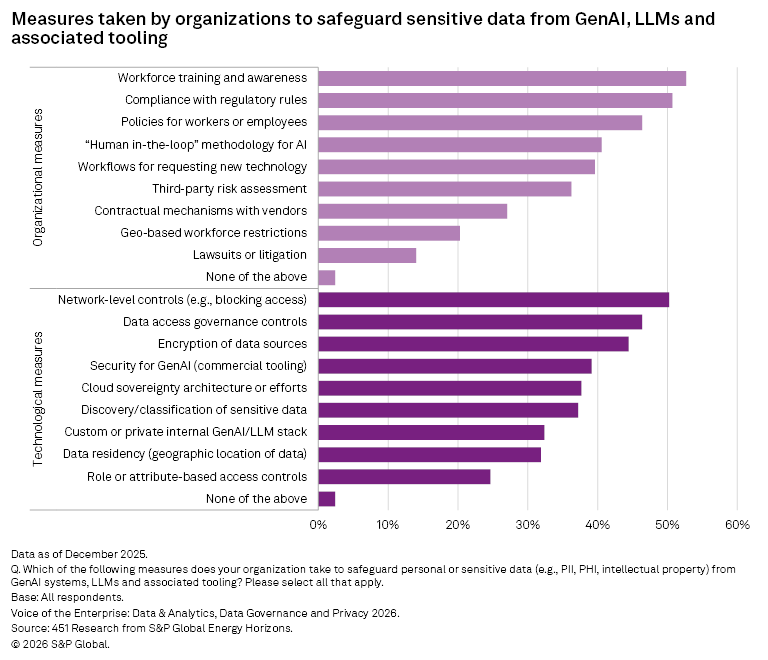

The aggregate survey responses suggest that organizations are pursuing a wide array of tactics to keep their own data from leaking into GenAI tooling and models. Cultural and technical measures are being pursued at nearly equal rates across the board. However, some differences emerge based on the geographic scope of company operations, as we discuss in a recent Voice of the Customer Report. Multinational organizations, with a broader global footprint, are more likely to pursue technical controls for their data than their locally and nationally operating peers. They are also much more likely to use contractual mechanisms to limit the scope of data collection and training by their vendors (40% for multinationals versus 27% for local and national firms).

Technological controls are theoretically available to any organization, for the right price and the right investment of time and resources. Cultural controls, however, can be a bit more elusive. When working with large tech incumbents or highly funded AI firms, it is multinational organizations that maintain the legal muscle to negotiate contracts in their favor and respond swiftly and appropriately when terms are violated. The proportion of data protection mechanisms that rely on legal action alone is not insignificant at the enterprise level.

Privacy for me, but not for thee?

While organizations and businesses apparently have ample motivation to keep their sensitive data protected from LLMs, consumers may not have that same luxury or choice. Some models can be installed and run locally, limiting the risk of information "leakage," but doing so requires a level of technical skill that many consumers lack. As such, much consumer interaction with LLMs and GenAI happens via a web or app interface, allowing a much greater range of information to be collected and potentially fed back into models for training.

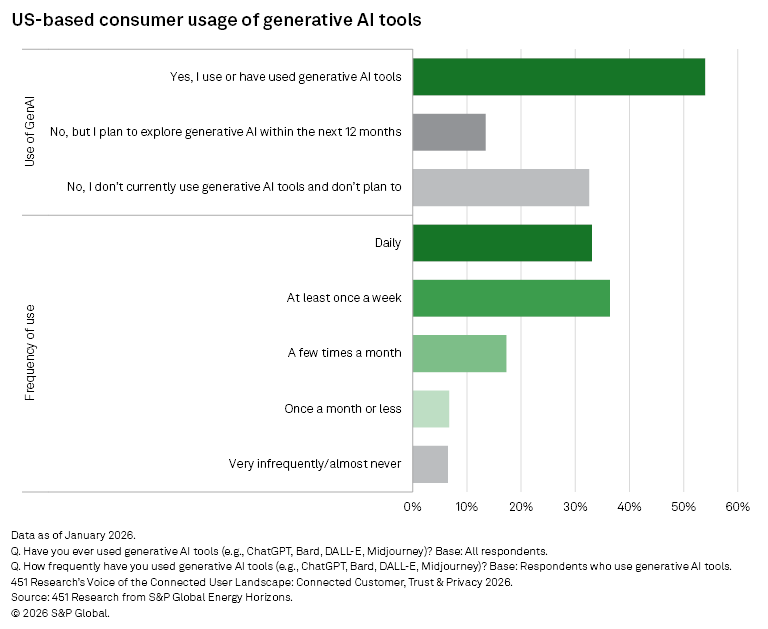

Consumers are eager to use GenAI, yet wary of privacy threats and consequences. Based on 451 Research's Voice of the Connected User Landscape: Connected Customer, Trust & Privacy 2026 survey, 54% of US consumers in a population-representative sample indicate that they have already used generative AI tools. Among those users, 33.1% report using GenAI tools daily.

However, when consumers were asked about concerns regarding the future of GenAI, the overall top response was "risks to my data privacy" at 51.3%. Among over a dozen options, this concern about privacy outpaced concerns about fraud or scams, and even the concern about the potential to replace jobs. US-based consumers are also wary of new experiences that require sharing of personal data, with 77.6% either "somewhat" or "very" concerned when trying digital experiences or using digital products that require sharing personal data online.

This suggests that consumers are eager to adopt and use GenAI but are worried about privacy implications. Perhaps they have every right to be concerned.

Click 'accept' and carry on

We have written about the role that consent and preference practices play online, as well as their potential future. Consent is essentially meaningless if consumers or product users do not fully understand what they are consenting to, yet modern terms of service (including privacy policies) are often sufficiently dense and sprawling that it would be unreasonable to think that every consumer would have the chance to read them all, or even understand the legal implications.

That doesn't stop businesses, especially in the US, from using this exact mechanism for consent in the absence of strong federal privacy or consumer protection law. Comparing the current (March 2026) terms of service and privacy policies of Anthropic PBC and OpenAI LLC for their consumer-grade offerings, the result is clear: All prompts and responses are collected by both companies, and models are trained by default on the consumer's activity data unless that consumer goes through the required steps to opt out. The retention period for this consumer data is typically 30 days on the back end, but can be much longer if the user has opted in or there is a legal hold on the content. Both companies collect and store metadata associated with the user sessions. Both companies allow for data sharing with affiliates and related entities, as well as for compliance with legal or law enforcement requirements.

As enterprises increasingly use legal negotiation and technical measures to "lock down" sensitive and proprietary data, foundation model providers appear to be turning more toward consumer interactions and data for further training these massive models. Even Anthropic, which originally did not use consumer data for training by default, started to require users to opt out in late 2025. Other examples abound online: LinkedIn Corp. (owned by Microsoft Corp.) started to use user posts and content for model training by default in November 2025, unless the user went deep into the platform settings to opt out.

Many consumer plans for access to generative AI tools are paid subscriptions, yet that does not stop data from being collected and used to train models. The old tech adage was, "If it's free, you are the product." Today, consumers are increasingly the product and source of data, whether they pay for a GenAI service or not.

This article was published by S&P Global Market Intelligence and not by S&P Global Ratings, which is a separately managed division of S&P Global.
