Featured Topics

Featured Products

Events

S&P Global Offerings

Featured Topics

Featured Products

Events

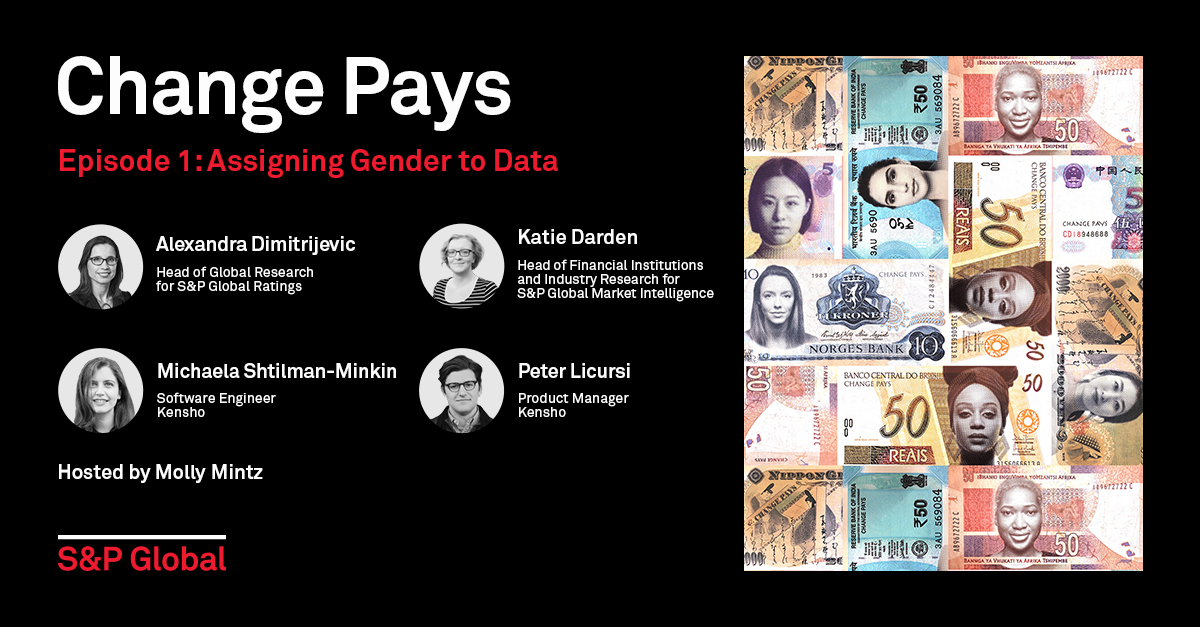

Podcast — 1 Dec, 2019

By Molly Mintz

What are the impacts of assigning gender to data? In this episode, Alexandra Dimitrijevic, S&P Global Ratings Head of Global Research and the founder of S&P Global's Women's Research Council, discusses how assigning gender to data can expand ESG research. Katie Darden, Head of Financial Institutions Industry Research for S&P Global Market Intelligence, explains how she built an algorithm in Excel that assigns gender to a database of S&P 500 CEOs. Software engineer Michaela Shtilman-Minkin and product manager Peter Licursi, both at Kensho, part of S&P Global, share how they are advancing Katie's original tool as a potential product that can assign gender to data with a high probability of effectiveness.

From S&P Global, Change Pays is a monthly podcast exploring gender equality and inclusivity in the global economy, workplace, and markets. Host Molly Mintz interviews influential leaders from S&P Global and decision-makers from companies and countries around the globe to continue the conversation about what it means to create inclusive economies and accelerate progress.

Listen and subscribe to this podcast on Apple Podcasts, Spotify, and Google Play.


Introduction: To me, Change Pays means empowering women around the world to become confident and impactful leaders. Change Pays is about everything from creating a more inclusive workplace to advocating for gender equality. Change Pays, for me, is something that harnesses the power of research to reinforce the importance of an inclusive and diverse workforce. Change Pays means finally having an opportunity and a platform for our collective voices to be heard.

Molly Mintz: From S&P Global, this is Change Pays, a new monthly podcast exploring inclusivity and gender equality in the global economy, workplace, and markets. I'm Molly Mintz. At the beginning of every month, I'll talk with leaders from S&P Global and with decision-makers from companies and governments around the globe to continue the conversation about what it means to create inclusive economies and accelerate progress. This month: What are the impacts of assigning gender to data? First, Alexandra Dimitrijevic, S&P Global Ratings' Head of Global Research and the founder of S&P Global's Women's Research Council, discusses the sensitivities associated with assigning gender to large databases and the impact doing this can have on ESG research.

Alexandra Dimitrijevic: This type of data on gender diversity is really one of the many indicators or many data points that more and more participants in the market are going to want to look at to see how companies are doing in terms of diversity for their workforce. And, obviously, gender is just one form of diversity.

Molly Mintz: Alongside Katie Darden, Head of Financial Institutions Industry Research for S&P Global Market Intelligence, who explains how she built an algorithm in Excel that assigns gender to a database of S&P 500 CEOs.

Katie Darden: An inescapable tension here is that we are, in a way, lumping human beings into categories that pertain to a really personal aspect of their identities.

Molly Mintz: Later in the episode, software engineer Michaela Shtilman-Minkin and product manager Peter Licursi, both from Kensho, part of S&P Global, discuss how they are advancing Katie's original tool as a potential product that can assign gender to data with a high probability of effectiveness.

Michaela Shtilman-Minkin: I decided to make a few improvements, and the first step I did was rewrite Katie's logic in Python.

Peter Licursi: Right off the bat, you're seeing a tool that performs at 94%. That's pretty good, even though the dataset is not a humongous one. What impressed me the most was that, strategically, Michaela didn't try to reinvent the wheel, but instead got into the logic of the program that Katie created and made changes that would make it easier to implement down the line, so that we could potentially consider expanding it.

Molly Mintz: Let's get started. Alexandra, what led you to create the Women's Research Council?

Alexandra Dimitrijevic: S&P Global has published very interesting research at the macro level investigating all the ways that diversity can benefit not only companies but the broader economy. Reading and understanding all of this research, and talking about it with market participants and public policy bodies, I came to the realization that we could go even further in leveraging all of the data, expertise, and insight that S&P Global has across our different divisions. We've been looking at ways to go deeper in this analysis: looking at data at the company level, breaking down the data by geography, going beyond the U.S. to more granular studies around the world, and also looking at the data by industry. The way to do that has been to create the Women's Research Council, which is really a task force that brings together experts from across the divisions who want to make a difference and advance the debate around the benefits of gender diversity by bringing in more data and insights that can then help influence decisions.

Molly Mintz: What has the Women's Research Council accomplished thus far?

Alexandra Dimitrijevic: The first research that we published under the Women's Research Council came out in September. It was research on women in the energy sector, in support of a very big conference in Asia, APPEC. We really tried to combine quantitative analysis, looking at female participation at the C-suite level and at the board level, for the different subsegments of the energy industry. And Katie can tell you a lot more about how we obtained that data. We looked at trends by region over time and saw that the number of women in senior management and on the boards of companies in the S&P Global BMI Energy and Utility Indices has more than doubled over the past two decades, and it's now approaching 15%, so we've had some really good quantitative results from that. But we've also done a survey of the largest companies that operate in that space, asking them what their companies are doing on the topic and how optimistic they are, or not, about future trends. And here we had the good news that 60% of the industry executives we interviewed were optimistic about the trend and the change. So it's really a combination of quantitative data work and applying our insights and our knowledge of a lot of companies around the world.

Molly Mintz: Katie, you're a member of the Women's Research Council, so tell me: How have you applied the skills of your everyday work conducting research on the banking, insurance, and financial technology sectors to your work assigning gender to data for the Council?

Katie Darden: There are lots of different ways that you could go through the process of assigning gender to people, to individuals, in the dataset. Of course, gender is not something that is disclosed as such and for good reason. Why would it be? There are clues in the data that can help us determine the most likely gender for an individual. So I would just like to back up a moment and tell you a little bit more about the people data that's available through the S&P Global Market Intelligence Platform. We have teams that collect lots of data on individuals across the world. These are typically board members, company officers, you know, high level — from the C-suite, CEO, COOs, et cetera — to other senior professionals within the company. These are people who are typically named in public documents such as company filings, press releases, people who are listed on websites, all sorts of publicly available sources. Our platform collects that data and makes it easily available to researchers who want to access it. Some of the characteristics of that data would include, obviously, names; the company or other entity that people are affiliated with; their title. There's often biographical information, so maybe a paragraph or two or a little bit more about their background, and the biographical data contains some really valuable clues about what their gender might be. So, for example, a biographical data field might include an honorific or courtesy title, such as Mr., Ms., Mrs., et cetera. It would also likely include pronouns — gender pronouns — such as he, she, they, etc. And so we tap into those clues to help us make a determination about what that individual's most likely gender is.
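To make the idea of biographical clues concrete, here is a minimal Python sketch that pulls honorifics and gender pronouns out of a biography field. The `PersonRecord` fields and the `find_clues` helper are hypothetical illustrations. The episode does not describe the platform's actual schema or code.

```python
import re
from dataclasses import dataclass


@dataclass
class PersonRecord:
    """Hypothetical shape of one row of people data (not the real schema)."""
    name: str
    company: str
    title: str
    biography: str = ""


# Case-sensitive, whole-word match, so "MS degree" is not read as "Ms".
HONORIFIC_RE = re.compile(r"\b(Mr|Mrs|Ms|Miss|Mx)\b")
# Whole-word, case-insensitive match, so "the" is never read as "he".
PRONOUN_RE = re.compile(r"\b(he|she|they)\b", re.IGNORECASE)


def find_clues(record: PersonRecord) -> dict[str, set[str]]:
    """Collect the honorifics and pronouns that appear in a biography."""
    bio = record.biography
    return {
        "honorifics": set(HONORIFIC_RE.findall(bio)),
        "pronouns": {p.lower() for p in PRONOUN_RE.findall(bio)},
    }


# A made-up record, used only to show the output shape.
ceo = PersonRecord(
    name="Jane Doe",
    company="Example Corp",
    title="CEO",
    biography="Ms. Doe joined in 2010. She leads the board.",
)
print(find_clues(ceo))
```

On the made-up record, the sketch reports `Ms` as an honorific and `she` as a pronoun. A record with no biography simply yields no clues. Neither case produces a gender determination on its own.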

Molly Mintz: How does this type of data collection align with the GDPR? That is, Europe's General Data Protection Regulation?

Katie Darden: An inescapable tension here is that we are, in a way, lumping human beings into categories that pertain to a really personal aspect of their identities, but we are doing it based on publicly available information, and we're only publishing the data in aggregate. So we are not publishing an individual's gender identity. We are making inferences based on publicly available data characteristics and then using that to compile aggregate analysis of trends across industries, geographies, et cetera.
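Publishing only aggregates can be sketched as a function that takes individual-level inferences as input and returns only per-group shares. The code labels (`"F"`, `"M"`) and the `min_group_size` suppression rule are assumptions added for illustration: a share computed over two or three people could point back to individuals. The episode does not say what thresholds, if any, are used.

```python
from collections import Counter, defaultdict


def aggregate_shares(
    rows: list[tuple[str, str]], min_group_size: int = 5
) -> dict[str, dict[str, float]]:
    """Turn (industry, inferred_code) pairs into per-industry shares.

    No names or individual codes are returned. Groups smaller than
    min_group_size (a hypothetical safeguard) are left out entirely.
    """
    counts: dict[str, Counter] = defaultdict(Counter)
    for industry, code in rows:
        counts[industry][code] += 1

    shares: dict[str, dict[str, float]] = {}
    for industry, counter in counts.items():
        total = sum(counter.values())
        if total < min_group_size:
            continue  # suppress small groups that could identify individuals
        shares[industry] = {code: round(n / total, 3) for code, n in counter.items()}
    return shares
```

The output contains only proportions per group, so an individual's inferred gender never appears in what gets published.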

Molly Mintz: What challenges did you face in assigning gender to your data?

Katie Darden: We don't necessarily know how people would identify themselves, but we can presume certain things. If a person's biographical data on a website includes the honorific Mr. or Ms., then I think we can make a reasonable assumption that they would identify with the gender most commonly associated with that honorific. Or if they have approved the use of the pronoun he or she in that data, then we presume that they are likely to identify with the gender most commonly associated with that pronoun. At first, I thought it would be best to use just two categories: basically male and non-male. My rationale was that men have historically dominated positions of power in politics, business, and other realms, while women and people who identify as neither male nor female have struggled to be recognized as equally competent or as deserving of power or opportunities. So it made sense to me at first to think about just those two categories: male and powerful versus non-male and less powerful. And I thought that lumping women and non-binary or gender-neutral individuals into one category could theoretically help us achieve a bigger sample size of non-males. Fortunately, S&P Global's People organization suggested a different approach. First, they made the important point that self-identification really needs to be the most important consideration in gender categorization. Non-binary individuals might not agree that a division into male and everyone else is acceptable, since it's, you know, binary.
So I ultimately built ways into the algorithm that I developed in Excel to highlight any individual who has an honorific such as Mx. (spelled M-X) or the pronoun "they" in their biographical data. The algorithm highlights that individual as someone the researcher should really verify, if they can: whether that individual should be in a non-binary category, or whether there are other clues in the data that lead to a different conclusion. It's about being sensitive to the simple fact that gender is not a simple matter of male versus female.

Molly Mintz: How did your relationship or feelings with the data change after you derived gender from your database?

Katie Darden: I don't know that I felt any different. However, I am very conscious that we're talking about people. We're talking about human beings, and everybody has a story. Reading the biographical description of a company's board member or CEO or other professional is maybe not always the most riveting reading in the world, but you become aware that this is somebody who really achieved a position that they are presumably proud of. And what we are interested in interrogating through the research that Alexandra described is: How do people get to these positions? What are the factors that have to fall into place for someone to become the CEO of an S&P 500 company, or to be looked at as a good board member for a different company? What is the confluence of factors that has to happen for people to get to where they are? And how does gender play a role in who is deemed worthy or adequately prepared for these roles?

Molly Mintz: What steps did you take to build your algorithm to assign gender to your data, and how does this algorithm work?

Katie Darden: I've done this manually before for other projects I've worked on. I learned the hard way that that is tedious and time consuming, so I started to look for ways to make it go faster. I built an algorithm in Excel using VBA, which is the internal programming language used in various Microsoft applications. This template uses VBA functions to assign a gender classification code to individuals in a given dataset. To do this, it first assigns three gender codes based on three pieces of data: a code based on honorifics such as Mr., Mrs., Ms., or Miss; a code based on the gender pronouns that show up in the individual's biographical data field; and a code based on their first name, if that's something we can determine. The algorithm searches for honorifics and pronouns in people's biographical descriptions, if we have those descriptions available for an individual. We do have them in our Market Intelligence people data for quite a lot of the individuals in the database. If the algorithm finds the title Mr., Ms., or Mrs., it indicates what that person's gender is likely to be based on that honorific. So, of course, Mr. signifies male; Ms. and Mrs. signify female. If there's no honorific in the data we have for that person, or if there are conflicting male and female honorifics in the biographical description, then the algorithm is pretty cautious: it doesn't make a gender determination based on the honorific. Now, if the biographical data contains the honorific Mx., spelled M-X, then the algorithm says this might be a case of non-binary gender identity, and you might want to check it out further. So it enters a different code that signals to the researcher: you need to look into this more. The template follows a similar logic when it comes to pronouns: "he" signifies male, "she" signifies female.
If the tool finds the pronoun "they" in the biographical data, it again enters a code that tells the researcher: look into this one more; you need to figure out what's going on here. It's important to note that the algorithm does err on the side of flagging possibly non-binary identities, whether they come up through honorifics or pronouns, so that the researcher can check more closely. We try to be cautious in flagging cases that need some further verification. The template also takes the individual's first name from the data and searches for it in two lists of common first names that I compiled from public sources and classified as male or female. I was really careful to exclude gender-neutral names from these lists, and I leaned heavily on third-party sources to identify whether certain names were male, female, or gender neutral. Finally, the template determines the individual's most likely gender based on those three gender codes, derived from honorifics, pronouns, and first names. It prioritizes the honorific-based gender determination on the assumption that if a person identifies as Mr., Ms., Mrs., or Mx., they are likely to identify with the gender category commonly associated with that title.
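The three per-signal codes Katie describes can be sketched in Python. This is not her VBA template. The code values (`"M"`, `"F"`, `"REVIEW"` for a researcher flag, `None` for no determination) and the tiny name sets are hypothetical placeholders. The sketch mirrors the rules she states: conflicting clues give no determination, and a possible non-binary marker is always flagged.

```python
import re
from typing import Optional

MALE_HONORIFICS = {"Mr"}
FEMALE_HONORIFICS = {"Ms", "Mrs", "Miss"}
REVIEW_HONORIFICS = {"Mx"}

# Case-sensitive, whole-word match, so "MS degree" is not read as "Ms".
HONORIFIC_RE = re.compile(r"\b(Mr|Mrs|Ms|Miss|Mx)\b")
# Whole-word, case-insensitive match, so "the" is never read as "he".
PRONOUN_RE = re.compile(r"\b(he|she|they)\b", re.IGNORECASE)


def code_from_clues(
    found: set[str], male: set[str], female: set[str], review: set[str]
) -> Optional[str]:
    """Reduce the clues found in one field to a single code."""
    if found & review:
        return "REVIEW"  # err on the side of flagging for a researcher
    is_male = bool(found & male)
    is_female = bool(found & female)
    if is_male and not is_female:
        return "M"
    if is_female and not is_male:
        return "F"
    return None  # no clue found, or conflicting male and female clues


def honorific_code(bio: str) -> Optional[str]:
    found = set(HONORIFIC_RE.findall(bio))
    return code_from_clues(found, MALE_HONORIFICS, FEMALE_HONORIFICS, REVIEW_HONORIFICS)


def pronoun_code(bio: str) -> Optional[str]:
    found = {p.lower() for p in PRONOUN_RE.findall(bio)}
    return code_from_clues(found, {"he"}, {"she"}, {"they"})


def name_code(first_name: str, male_names: set[str], female_names: set[str]) -> Optional[str]:
    """Look up a first name in two lists that exclude gender-neutral names."""
    name = first_name.strip().lower()
    if name in male_names:
        return "M"
    if name in female_names:
        return "F"
    return None  # not in either list, e.g. a gender-neutral name
```

Returning `None` rather than guessing keeps each signal conservative. The decision about which signal wins is left to a separate step.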

Molly Mintz: What happens if the algorithm cannot determine what gender to assign to each entry?

Katie Darden: If we don't have a conclusive gender determination based on the honorific, the template then turns to the pronoun-based gender determination to make the final call. If it's not possible to confidently categorize an individual based on either their title or their pronouns, the template uses the name-based gender determination to make that final call.
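The fallback order Katie describes (honorific first, then pronoun, then name) can be sketched as a small Python function. It assumes each signal has already been reduced to a code (`"M"`, `"F"`, `"REVIEW"`, or `None`). Those code values are hypothetical placeholders, not the template's actual codes.

```python
from typing import Optional


def final_determination(
    honorific: Optional[str], pronoun: Optional[str], name: Optional[str]
) -> Optional[str]:
    """Return the first conclusive code: honorific, then pronoun, then name.

    A "REVIEW" flag counts as conclusive, so a possibly non-binary case is
    passed to the researcher instead of being overridden by a name lookup.
    None means no signal was conclusive.
    """
    for code in (honorific, pronoun, name):
        if code is not None:
            return code
    return None
```

Treating a review flag as conclusive reflects the stated preference for erring on the side of flagging. A variant that let the name override a pronoun flag would contradict that preference.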

Molly Mintz: Alexandra, what was your perception of this process as Katie was undertaking this effort? Did it feel like a breakthrough in what you had envisioned for the Women's Research Council, or were you waiting to see what would come from this?

Alexandra Dimitrijevic: In a world where ESG (environmental, social, and governance factors) has become so critical for a number of businesses, for investors in their decisions, and for governments, this type of data on gender diversity is really one of the many indicators or data points that more and more market participants are going to want to look at to see how companies are doing in terms of diversity in their workforce. And obviously gender is just one form of diversity. There are a lot of other forms of diversity that are equally important and maybe even more difficult to measure. So the interest in this exercise was to say: first of all, this isn't data that is yet available per se on our platforms, but it is data that we believe we could generate with the use of algorithms or artificial intelligence.

Molly Mintz: Katie, do you share this vision? Are you planning on developing this work further?

Katie Darden: We are taking steps to make that tool even more robust in collaboration with some of our colleagues from Kensho. They have been working on a tool that uses much of the logic from my Excel-based template and goes a step further with more robust name analysis: a really strong tool, built in Python, that also calls an API that specializes in name-based gender categorization.

Alexandra Dimitrijevic: Going forward, what we are trying to do, as Katie was saying, with Kensho, which has huge AI capabilities, is to build an AI tool that we could then use across all of the divisions, including for the work that S&P Global is doing on ESG. I guess what this is getting at is: Why are we doing all this work? Why are we trying to obtain this data? Why are we trying to understand the implications of greater female participation in the workforce overall, and even more at the senior level? Why does it matter? It's really about trying to understand, and being able to relate or correlate, some of this information with some forms of company performance.

Molly Mintz: Going forward, will all of S&P Global's data where applicable be assigned gender?

Katie Darden: That's a great question. I don't know that I can answer it, and I don't know whether it's ultimately something that belongs in the data that's available to clients. I really can't answer that, but I do think it is important, as Alexandra has said, to ask these questions about female representation, versus other genders' representation, in the workforce overall and in companies' leadership ranks. I mentioned earlier a point of tension: lumping people into categories based on a really personal aspect of their identities. But I hope, and I like to think, that this tension is ultimately outweighed by the value of this research in promoting equality and in pushing readers, people who are interested in this space, and even others to think critically about gender, and really about any characteristic or category that knowingly or unknowingly influences the distribution of power in our society. With a small but hopefully growing sample size of historically marginalized people in these positions of power, I hope that we'll have even more data to work with and analyze.

Alexandra Dimitrijevic: Gender diversity is one of the data points that matter among ESG factors, so all the work that the Women's Research Council has been doing could benefit the broader work that S&P Global is doing on ESG. As a company, our role, and what we're trained to do, is to provide essential intelligence (the data, the insight, the benchmarks) to market participants to inform their decisions and help them make decisions with conviction. We see a growing number of market participants who want to include some of these ESG factors in their decision-making process. But on gender diversity in particular, we also see a lack of data at a sufficiently granular level, by industry and by country, that could really help inform decisions and could contribute to a change in some of the previous patterns of investment decisions.

Molly Mintz: As I see it, having gender as this additional data point could be beneficial for investors even if they don't utilize it for every investment decision. At least it's there as an option for investors who want to consider this type of social factor or are interested in ESG. It also introduces equality into the equation on a consistent basis, but that's just me. How do you see the story or the analysis or the insight change when gender is included in the conversation?

Alexandra Dimitrijevic: Every investor might have different factors that influence their decisions, and to the extent that they would like to use this type of information to influence their investment decisions, having the data available is helpful to them. Advancing the debate also matters. That means doing original new research that can actually demonstrate a link between female participation at the senior levels (C-suite or board level) and company performance or investment performance, and that type of research would bring additional insight to market participants. We have seen an example here in the U.K., where the government has started to require companies above a certain size to disclose information on their gender pay gap. The disclosure requirement alone means each company has to collect the data, measure the gap, and then disclose it. We found that it's a very powerful process, because by collecting and seeing the data, you can then decide what you want to do and inform your strategy or your decision. If you don't have that data, it's a lot harder.

Molly Mintz: What comes next for the Women's Research Council?

Alexandra Dimitrijevic: Another topic that we are looking to investigate is the importance of STEM education, particularly in the context of the fourth industrial revolution and the transformation of jobs that we all know is going to accelerate in the coming decades. We want to investigate how well women are positioned, in terms of their education, to take on some of these new jobs. At the Women's Research Council, we are very interested in partnering with other associations, organizations, or companies that are working on similar topics, and in trying to generate some of that research together. So if anyone in the audience listening to us today is interested, they can get in touch.

Molly Mintz: I've been thinking a lot about the ways in which a gender data gap might persist because the majority of data does not include women or other groups. I'm interested to hear what you both have to say about this. Do you believe there is a gender data gap, and if you do, how would you define it or describe it or experience it?

Katie Darden: There certainly is, and it's obviously not limited to data about the private sector. There are whole fields of study in history, gender studies, and anthropology, with really smart people trying to identify where the silences are in anything from historical archives to medical data. Once you figure out where those absences, those silences, are, you can start to look for ways to change how data is collected, and maybe even how history is recorded, going forward. You can also start to look for alternative ways to get at the information you're looking for. But ideally, we can start to close that gender data gap in our own small ways through the work of some of the people on the Women's Research Council here.

Alexandra Dimitrijevic: I would say that, just generally in the world, there's a lot more data available, with AI growing more powerful every day. There are also a lot more ways that we can work dynamically with very large quantities of data and learn from it, which just a few years ago were not as easily available. As I mentioned at the beginning, gender diversity is obviously important, but diversity is a much broader topic, and there are also a lot of opportunities to explore getting more data on ethnicity, social backgrounds, and so on.

Katie Darden: It's hard to solve a problem if you can't quantify it and really define it. So I think that having this additional aspect to the data can help people see, in black and white, right in front of their faces, what gender representation looks like in business, or anywhere else you might want to apply it. If you look at the numbers and think, this doesn't seem logical, it can make you start asking other important questions, like why is it this way? And that can lead you further down the path of really asking not just what the problem is, but what is causing it, and how we can solve it.

Molly Mintz: Even within one company, researchers are leveraging different approaches to yield similar results. At Kensho, software engineer Michaela Shtilman-Minkin and product manager Peter Licursi are experimenting with more advanced technology tools to take Katie's algorithm from practice to product.

Michaela, I want to know how you fit into not only the Women's Research Council, but also Kensho overall. Could you tell me a little bit about yourself?

Michaela Shtilman-Minkin: I've been at Kensho for about two and a half years now, as a software engineer the entire time. Early on in my Kensho career, I was involved with our diversity and inclusion committee, which works, of course, on promoting diversity and inclusion at Kensho. About a couple of months ago, I heard about the Women's Research Council and became interested in it, because I think it's going to help us learn a bit more about what it's like to be a woman in the workplace, and that's a huge part of our D&I initiative.

Molly Mintz: How did you start this project? How did the Women's Research Council approach you to bring you both on board?

Peter Licursi: We were approached a few months ago by people in the data governance group at the Women's Research Council to see if Kensho could lend a hand with some of the research that people involved in the Women's Research Council had been pursuing, and it immediately seemed like an excellent opportunity to collaborate. At Kensho, we think of our goal as developing cutting-edge AI and machine learning technologies that help make S&P Global the greatest data company in the world and the benchmark for data. It's in that same spirit that we've approached our collaboration with the Women's Research Council.

Molly Mintz: You're approached by the Women's Research Council, you're excited about this opportunity to apply your data analysis and learn more about women in the workplace. What did Katie do? Katie Darden came to you with her Excel spreadsheet, and what did she say?

Peter Licursi: When we first met with Katie, it became clear right off the bat that we could help implement some relatively straightforward engineering solutions to streamline some of the tools she had developed. From my standpoint, having worked with Michaela, I knew she would be the right person to drive that problem-solving from an engineering point of view and build the software that would really make an impact for the researchers doing their work within the WRC.

Molly Mintz: Michaela, what was your immediate reaction when you saw what Katie had created?

Michaela Shtilman-Minkin: I really liked Katie's idea and the spreadsheet she set up, and I was very excited about taking it a step further and making it a bit more flexible and robust.

Molly Mintz: Let's start going through the process. How exactly did you get started?

Michaela Shtilman-Minkin: Katie used two primary data points. The first was the person's name, which in this case was the full name, but it could also be just the first name. The second was their biography, if it was available. She started out by experimenting with data about S&P 500 CEOs. The methodology worked as follows. The first question was: does this person have an honorific in their biography, for example, Mrs., Ms., or Mx.? If so, she designated the gender that way. If an honorific couldn't be found, we go to the next option: was this person referenced by a pronoun in their bio, for example, he, she, or they? If so, we designated their gender that way. If neither option worked, for example if the bio wasn't available, Katie leveraged two lists of names, one classified as female and one as male. The lists excluded gender-neutral names, so a name like Taylor would not appear in either list and would get a gender-neutral designation. I thought that worked really well. The results were pretty good: I think it was well over 90% accuracy, maybe over 93%. I decided to make a few improvements, and the first step I took was to rewrite Katie's logic in Python. There are a few benefits to doing that. The first is that I was able to add more custom error handling, which meant we were better equipped to handle someone passing in bad input, for example, if somebody passed in the numbers one, two, or three instead of a name for some reason. Another thing we could do is give the user more specific detail about what went wrong. For example, if a user did put in the number one, two, or three, we could say: you were supposed to put in a name, not a number. That way, if somebody knows what they did wrong, they can immediately fix it, so it provides a tighter feedback loop.
And the last point, which looks more toward the future, is that if we wanted to make this a formal API or service, we now have a more extensible solution to get us started. That was the first part: rewriting this in Python. The second change we made was to look at the last part of the classifier, the one that uses the two lists of names. There are a few great APIs out there that, given a name, will return a result about the gender associated with that name. Let's look at an example. If I put the name Molly through one of those, which is actually the name we used, it will say there's a 95% probability that the name is female. Similarly, for Michaela, it will show something like 99%. I really appreciated that this didn't require us to maintain lists of names ourselves and that it included a probability. The probability is useful because it could help us select a threshold for what qualifies as a reasonable guess. For example, the name Taylor from earlier actually returned a 72% probability of male, which might not be good enough for what we wanted to do.
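Michaela's point about custom error handling can be illustrated with a small validator that rejects numbers and tells the user exactly what went wrong. The `validate_name` function, the `InvalidNameError` class, and the specific checks are hypothetical. They sketch the idea of a tighter feedback loop and are not Kensho's code.

```python
class InvalidNameError(ValueError):
    """Raised when the input cannot be a person's name (hypothetical)."""


def validate_name(value: object) -> str:
    """Return a cleaned name, or raise an error that says what was wrong."""
    if isinstance(value, (int, float)):
        raise InvalidNameError(f"Expected a name, but got the number {value!r}.")
    if not isinstance(value, str):
        raise InvalidNameError(f"Expected a name as text, but got {type(value).__name__}.")
    cleaned = value.strip()
    if not cleaned:
        raise InvalidNameError("Expected a name, but the input was empty.")
    if any(ch.isdigit() for ch in cleaned):
        raise InvalidNameError(f"Expected a name, but {cleaned!r} contains digits.")
    return cleaned


try:
    validate_name(1)
except InvalidNameError as err:
    print(err)  # the message names the problem, so the user can fix it
```

Subclassing `ValueError` lets existing callers that already catch `ValueError` keep working. A specific exception type also gives a future API or service something precise to map to an error response.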

Molly Mintz: I want to come back to what you just said about a name like Taylor. When I hear a name like Taylor, I immediately consider it a gender-neutral name. It doesn't really fit the binary of a female or male name. Yet the API is able to determine whether a name like Taylor is more likely to be a male name or a female name. How do you check whether that's really the case? How do you double-check to make sure that you're not assigning a gender to someone who actually doesn't identify with it?

Michaela Shtilman-Minkin: The best way for us to approach that is to do more manual verification and talk to the person, because the API is not perfect. It's based on the data that's available out there. The API's creators took as many people named Taylor as they could find and found that 28% of them are women. So beyond that, there isn't really a great way to know.
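The probability discussed here lends itself to a simple cut-off: Taylor's 72% "male" figure is the complement of the 28% of Taylors found to be women, and a threshold lets the tool abstain instead of forcing a label. The sketch below assumes a hypothetical API response shape (a label plus a probability) and hard-codes the three figures quoted in the episode rather than calling a real service; `lookup_name`, `guess_from_name`, and the 0.9 default threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class NameGuess:
    gender: str         # label returned by the (hypothetical) name API
    probability: float  # the API's confidence in that label, 0.0 to 1.0


# Stand-in for an external API, using the figures quoted in the episode.
# Taylor: 28% of known Taylors are women, so the API reports 72% male.
EXAMPLE_RESPONSES: Dict[str, NameGuess] = {
    "molly": NameGuess("female", 0.95),
    "michaela": NameGuess("female", 0.99),
    "taylor": NameGuess("male", 0.72),
}


def lookup_name(name: str) -> Optional[NameGuess]:
    """Hypothetical API call; here it only reads the example table."""
    return EXAMPLE_RESPONSES.get(name.strip().lower())


def guess_from_name(name: str, threshold: float = 0.9) -> Optional[str]:
    """Return the API's label only if its probability clears the threshold."""
    guess = lookup_name(name)
    if guess is None or guess.probability < threshold:
        return None  # abstain: unknown name, or not confident enough
    return guess.gender
```

Returning `None` rather than a forced label leaves low-confidence names such as Taylor for the kind of manual verification Michaela recommends.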

Molly Mintz: So now you've built this script and you're testing its capabilities. How does its likelihood of assigning the correct and appropriate gender compare with what Katie's Excel spreadsheet was able to do?

Michaela Shtilman-Minkin: The one verified dataset we used is the S&P 500 dataset, which Katie has access to. I believe that with the Python script it was effectively 100%, whereas with the Excel spreadsheet it was around 93%.
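Comparing the spreadsheet with the script comes down to scoring each one's output against the verified labels. A minimal sketch with made-up records (not the S&P 500 data); the `accuracy` helper is hypothetical, and counting an abstention or missing prediction as a miss is one reasonable convention among several.

```python
from typing import Dict, Optional


def accuracy(predicted: Dict[str, Optional[str]], verified: Dict[str, str]) -> float:
    """Share of verified records whose predicted label matches exactly.

    Records the classifier skipped (missing or None) count as misses.
    """
    if not verified:
        raise ValueError("The verified dataset is empty.")
    correct = sum(1 for key, label in verified.items() if predicted.get(key) == label)
    return correct / len(verified)


# Made-up records for illustration only.
verified = {"ceo_1": "female", "ceo_2": "male", "ceo_3": "female", "ceo_4": "male"}
predicted = {"ceo_1": "female", "ceo_2": "male", "ceo_3": None}
print(accuracy(predicted, verified))  # 0.5
```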

Molly Mintz: What were the biggest challenges that you came across in doing this?

Michaela Shtilman-Minkin: I think one of the bigger challenges is that it's not exactly what I do in my day-to-day job. A lot of my work is on the infrastructure engineering side rather than dealing with data. So it was a bit of a challenge, and a bit of fun, to work with data, which I don't actually get to touch day to day.

Molly Mintz: Did your perception of women in the workplace change, or did anything change in your mind, now that you've seen this data, worked with it, and gone on a journey with it from beginning to end?

Michaela Shtilman-Minkin: Being a woman in the workplace, and being on the diversity and inclusion committee here, I'm already a little painfully aware of how few women there are among S&P 500 CEOs. So it confirmed that a bit, but I'm very excited to have this data officially available now. And it'd be pretty cool if we could extend this to even bigger datasets to get a broader view of the industry and what's going on.

Molly Mintz: I think it's interesting to explore whether all datasets should include gender as a characteristic. Do you think that's something all researchers should start taking into consideration?

Michaela Shtilman-Minkin: I think gender is lacking from research overall. Most recently, I've been reading a bit more about how much it's missing from pharmaceutical studies. When researchers run studies for a new drug, they don't always include all genders, or even all ethnicities, so effectively 50% of the population can go untested on whether the drug works well for them.

Molly Mintz: Peter, when seeing Michaela undertake this process, what did you learn?

Peter Licursi: One of the really impressive things was observing as Michaela took apart what was already an excellent product that Katie had created, problem-solved it piece by piece, identified the specific pain points, and got at the elements where we could make the biggest impact based on her skill set. It was really cool to watch Michaela dive into this problem and laser in on specific areas that could use improvement. It took a lot of creative thinking to find places to create efficiency that most of us, particularly those of us without an engineering background, might not pinpoint.

Molly Mintz: Give me a few examples of that.

Peter Licursi: Right off the bat, you're seeing a tool that performs at 94%. That's pretty good, even though the dataset is not a huge one. What impressed me most was how Michaela strategically avoided reinventing the wheel and instead got into the logic of the program Katie created, making structural changes that would make it easier to implement and more robust down the line, so that we could potentially consider expanding it.

Molly Mintz: Michaela, moving forward, as you both consider expanding this, what do you think you can improve on, or what do you need to fine-tune, for this to become a serviceable product?

Michaela Shtilman-Minkin: I think one of the biggest things is general infrastructure around the service. Right now, we're running all of this as a script in a Python notebook, which is an environment where you can very quickly develop a bit of Python code. For more people to use it, we definitely have to scale it up to a fully fledged service and provide some official endpoints for people to hit.
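Moving from a notebook to a service mostly means putting the classifier behind an endpoint. Here is a minimal sketch using only Python's standard library; the `/classify` path, the JSON field names, the port, and the stub `classify` function are all hypothetical, and a production service would more likely use a web framework with authentication and logging.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Any, Dict, Tuple


def classify(name: str, bio: str = "") -> str:
    """Hypothetical stub; plug the real name/bio classifier in here."""
    return "unknown"


def handle_classify(body: bytes) -> Tuple[int, Dict[str, Any]]:
    """Validate a JSON request body and return (HTTP status, JSON response)."""
    try:
        payload = json.loads(body or b"{}")
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400, {"error": "Request body must be valid JSON."}
    if not isinstance(payload, dict):
        return 400, {"error": "Expected a JSON object."}
    name, bio = payload.get("name"), payload.get("bio") or ""
    if not isinstance(name, str) or not name.strip():
        return 400, {"error": "Expected a non-empty 'name' string."}
    if not isinstance(bio, str):
        return 400, {"error": "'bio' must be a string if provided."}
    return 200, {"name": name, "gender": classify(name, bio)}


class ClassifyHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/classify":
            self.send_error(404, "Unknown endpoint")
            return
        length = int(self.headers.get("Content-Length", 0))
        status, result = handle_classify(self.rfile.read(length))
        data = json.dumps(result).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port: int = 8000) -> None:
    """Run the service locally until interrupted."""
    HTTPServer(("127.0.0.1", port), ClassifyHandler).serve_forever()
```

After calling `serve()`, a POST of `{"name": "Molly"}` to `http://127.0.0.1:8000/classify` would return a JSON body. Keeping validation in `handle_classify` makes it testable without a running server and lets the service return specific error messages rather than a generic failure.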

Molly Mintz: And Peter, what next steps are you taking to explore the potential product behind this?

Peter Licursi: We're looking to see essentially how to make this the most useful for the members of the Women's Research Council. They are going to use this data in a certain way and we want to make sure that we can help tailor whatever we're creating to their needs.

Molly Mintz: What do you really see this script being the most useful for?

Michaela Shtilman-Minkin: The script as it stands right now is fairly generic, right? It takes in a name and an optional biography. One idea would be to open-source something like this, which means anybody could use it as a package if they wanted to classify a name, in whatever research field they're interested in.

Molly Mintz: Looking at the bigger picture, surely across the world, across industries, and at companies like Kensho and across S&P Global, different researchers are taking their own approaches to assigning gender to data. Of all the things you've learned and all the takeaways you've shared, what would you tell anyone who is just starting this effort, whether they have a preexisting tool to work with like you both did or are starting from scratch?

Michaela Shtilman-Minkin: I think one of the biggest things to keep in mind is that our data mostly works for American names right now. So if you wanted to take this to a more global scale, we might not be able to identify the gender of a name from another country, right? Or the same name might normally be assigned to a different gender in another country. For example, in the United States Andrea is commonly known as a woman's name. However, if you looked at a country like Italy, you'd have Andrea Bocelli, who is a man, and there the name is usually given to men.
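One way to account for regional differences like Andrea is to key lookups by country as well as by name, and to decline to guess when no country is known. The table below is a hypothetical two-entry illustration built only from the example in the episode; `guess_gender` and the use of ISO country codes are assumptions, not part of the tool described here.

```python
from typing import Dict, Optional, Tuple

# Hypothetical table: (lowercased first name, ISO country code) -> usual gender.
# Only the Andrea example from the episode is included.
NAME_GENDER_BY_COUNTRY: Dict[Tuple[str, str], str] = {
    ("andrea", "US"): "female",
    ("andrea", "IT"): "male",
}


def guess_gender(first_name: str, country: Optional[str]) -> Optional[str]:
    """Look up a name's usual gender for one country; None means no guess."""
    if not country:
        return None  # the same name can differ by country, so don't guess
    key = (first_name.strip().lower(), country.strip().upper())
    return NAME_GENDER_BY_COUNTRY.get(key)
```

Returning `None` for unknown countries, rather than falling back to US defaults, keeps the Anglophone bias of the underlying data from leaking silently into results for other regions.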

Molly Mintz: Being aware of the sensitivities regionally and globally is definitely an important takeaway.

Peter Licursi: The first API we decided to use focuses more on names from Anglophone countries, but we could identify other APIs that would address those issues. One of the things that's really impressed me, observing the way the Council works, is its genuinely collaborative process of challenging people's perceptions of data and making sure that when you look at a piece of data, you understand that you're looking at it through your own eyes. What's really important is to consider multiple perspectives when approaching a dataset, particularly when you're handling something as personal as gender identity.

Molly Mintz: I mean, when you think about how many people interact with different data and how everyone is counted in a dataset, it's so important that the ways we identify in the world are reflected in the datasets that define all of our actions. I would love to hear your thoughts about reflecting non-binary identities in your work.

Michaela Shtilman-Minkin: We did our best to detect non-binary folks through the use of honorifics, such as Mx., and the pronoun they, when those were available in the bio. Unfortunately, it is practically impossible to infer this from a name. Furthermore, I agree with Katie that it's probably not great to put people into buckets, right? From a holistic standpoint, this tool can be useful for fueling research efforts and monitoring general trends. But, of course, it's not precise and we should be very, very considerate of how we use it. I hope we can improve the tool further in the future and take some time to explore being even more inclusive in our approach.

Molly Mintz: Would building that inclusivity into the basis of the API, so that you have an API that represents and includes a variety of genders, be one avenue to explore?

Michaela Shtilman-Minkin: I'm not sure if the API we leverage for our tool right now is capable of doing so, but perhaps we can do more things on our end outside of what the API is capable of doing to be a bit more careful with our output.

Molly Mintz: Having explored this data and created this project, how will you know and how will we know that we've reached gender equality? When you're looking at the data, what will we see and what will we recognize as this milestone of finally reaching parity?

Peter Licursi: Data provides you with information, right? It's just information that's an input that you can use to make analytical decisions. And so as far as I'm concerned, the more data that we can collect and the more analysis and research that we can perform on that data, the better and clearer picture we're going to get about those issues. I'm not sure about prescriptive solutions, but I do know that the work of making sure that we pursue this information and scrutinize and analyze it is certainly a worthwhile initiative.

Molly Mintz: Michaela, what are your thoughts on this? When will we know that we've reached gender equality?

Michaela Shtilman-Minkin: A 50/50 breakdown, or just a general gender balance that is more reflective of the overall population: I think that's when you know you've come pretty close. But it's a bit of a finicky subject, and you're never going to know exactly when you're there.

Molly Mintz: Why do you think that's the case?

Michaela Shtilman-Minkin: It's very difficult to look at data and say, we've arrived at equality. Right, because this data is just about the gender of the person. Whether or not they're on the S&P 500 CEO list is just one metric, just one slice. There are a lot of other ways you can look at a person, compare them to other people, and see if they have the same standing, because people are a lot more complicated. Even if we had a thousand different pieces of information about every person, it would be very hard to say, oh, they're equal because of X, Y, and Z.

Molly Mintz: Across the board, if 50% of S&P 500 companies had female CEOs, for one person and one metric that may mean equality, but if those female CEOs were still paid dramatically less than their male counterparts, then perhaps we have not reached equality.

Michaela Shtilman-Minkin: Right. Another way to look at this: you could look at data and see that everybody at the company gets the same amount of vacation, but you could still have men getting no parental leave and women getting 10 weeks.

Molly Mintz: Thank you for listening to the first episode of S&P Global's Change Pays podcast. Thank you to Alexandra, Katie, Michaela, and Peter for talking with me. Subscribe to this show on Apple Podcasts and Spotify, share your feedback on social media with the hashtag #ChangePays, and listen to new episodes at the beginning of every month everywhere podcasts are played. To read the research we discussed in this episode, visit SPglobal.com/changepays. Talk to you next time.